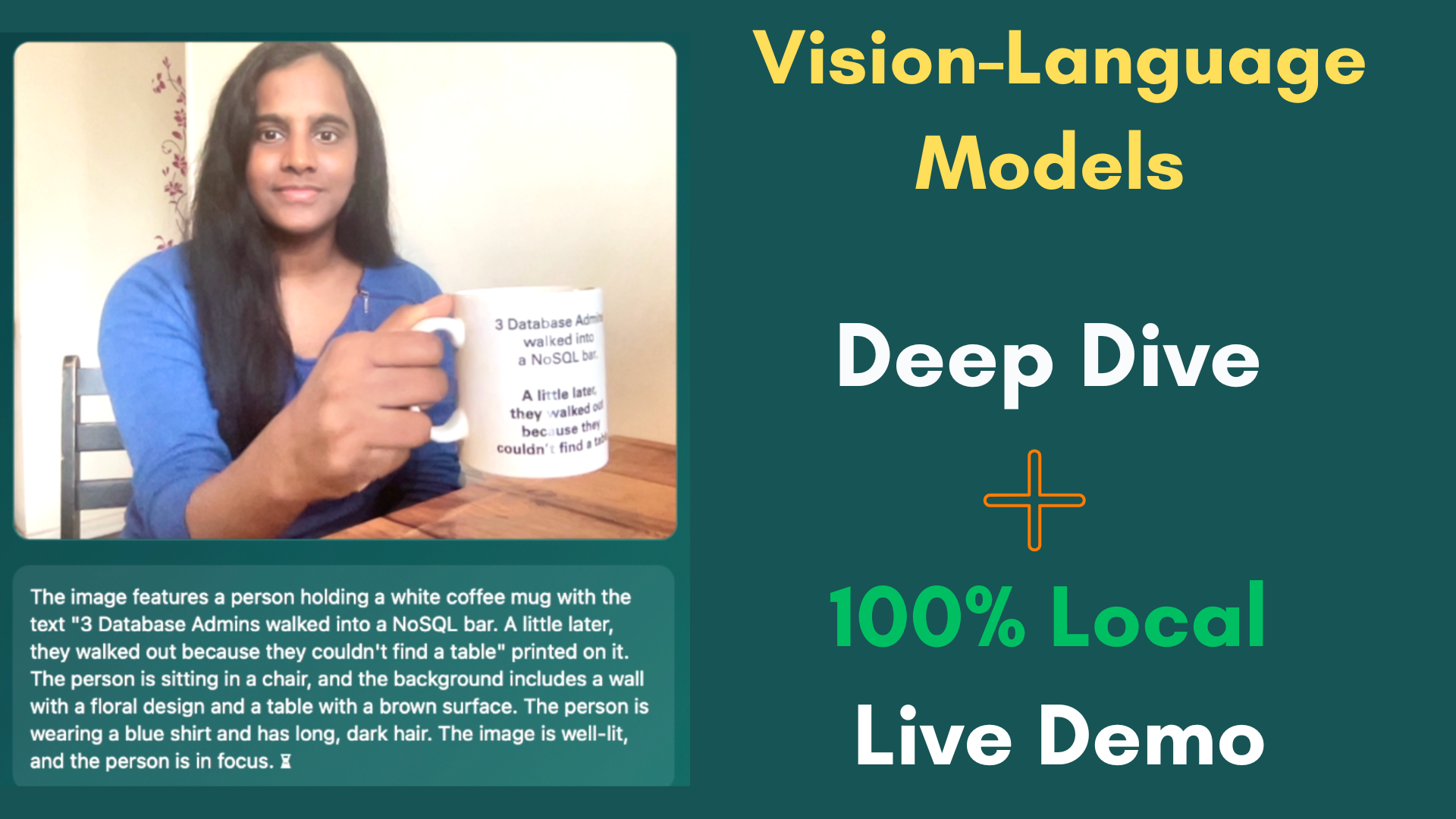

Vision-Language Models - MultimodalAI - From Foundations to a Fully Local SmolVLM Demo

A deep, researcher-level exploration of Vision-Language Models in 2026, covering core architectures, training paradigms, and multimodal fusion mechanisms. The tutorial connects theory to practice through a fully local, real-time live demo system running entirely on-device, demonstrating how modern models unify visual perception and language reasoning without relying on cloud infrastructure. It also includes a SmolVLM-based local captioning demo, where a lightweight Vision-Language Model generates natural language descriptions from live camera input in real time.

Reading_time: 5 min

Tags: [VisionLanguageModels, SmolVLM, #MultimodalAI, AIResearch, MachineLearning, DeepLearning, ComputerVision, NLP, OnDeviceAI, EdgeAI, AIEngineering, LLM, AI2026]

- Vision-Language Models - From Foundations to a Fully Local SmolVLM Demo

Vision-Language Models - From Foundations to a Fully Local SmolVLM Demo

GitHub Repository: vlm-deep-dive-demo

Vision-Language Models (VLMs) represent one of the most important shifts in modern AI: the unification of visual perception and language reasoning into a single multimodal system. Instead of treating images and text as separate domains, VLMs allow models to “see” and “describe” the world using a shared representational space.

This tutorial explores the core ideas behind VLMs, their architectural evolution, training paradigms, and practical implementation through a fully local SmolVLM-based captioning system. The entire demo runs on-device, showing that modern multimodal AI is no longer limited to cloud-scale infrastructure.

Video Structure Overview

- 0:00:00 – Introduction

- 0:01:37 – VLM Architectures, Training Paradigms, and Evolution

- 0:09:52 – Codebase Walkthrough

- 0:16:19 – Live Demo (SmolVLM Local Captioning System)

- 0:17:56 – Conclusion and Key Takeaways

Introduction

Traditional AI systems treated vision and language as separate problems. Computer vision models focused on classification or detection, while language models handled reasoning and text generation. These systems were powerful individually but lacked a unified understanding of multimodal context.

Vision-Language Models change this fundamentally. They enable a single system to interpret images and generate language grounded in visual input, bridging perception and reasoning in one pipeline.

VLM Architectures, Training Paradigms, and Evolution

Modern VLMs are typically built using three core components:

1. Vision Encoder

Most systems use Vision Transformers (ViTs) that split images into patches and convert them into token embeddings. This allows images to be processed similarly to text sequences.

2. Multimodal Fusion Layer

This layer aligns visual tokens with textual tokens. Early approaches used contrastive learning (for example CLIP-style objectives), while modern systems increasingly rely on generative training and instruction tuning.

3. Language Model Decoder

A transformer-based language model generates text conditioned on visual embeddings. This enables tasks such as captioning, visual question answering, and reasoning over images.

Evolution of Training Paradigms

VLM development has gone through several key phases:

- Contrastive learning phase: aligning image-text pairs in embedding space

- Generative phase: directly generating language conditioned on images

- Instruction-tuned phase: training models to follow natural language prompts

- Parameter-efficient adaptation: using methods like LoRA and adapters to integrate vision into large language models efficiently

The key shift is from classification-style outputs to reasoning-oriented generative models.

Codebase Walkthrough

The system in this demo is designed to be lightweight, efficient, and fully local.

Key Components

- Frontend (Browser camera input): captures frames from the webcam and sends periodic snapshots

- Backend (Flask server): handles image reception and preprocessing

- Model layer (SmolVLM): a compact Vision-Language Model optimized for efficient inference

Pipeline Flow

- Capture frame from webcam

- Resize and compress image in browser

- Send image to local Flask backend

- Decode and preprocess image

- Run inference through SmolVLM

- Generate caption token by token

- Return result to UI

This architecture prioritizes simplicity and low latency while remaining fully on-device.

Live Demo: SmolVLM Local Captioning System (0:16:19)

The live demo demonstrates SmolVLM generating captions from a real-time camera feed.

Key Characteristics

- Fully local inference with no cloud dependency

- Real-time image-to-text generation

- Lightweight model optimized for consumer hardware

- Periodic frame processing instead of continuous video streaming

Instead of processing every frame, the system samples snapshots, making inference efficient and stable.

The model interprets each frame as a semantic snapshot and generates a natural language description of the scene.

Why This Design Works

This approach reflects an important principle in modern multimodal AI:

Intelligence does not require continuous perception, but meaningful interpretation.

By treating each frame as a discrete observation, the system mimics human-like perception: observe, interpret, describe.

Conclusion

Vision-Language Models represent a major step toward unified multimodal intelligence. They eliminate the separation between seeing and speaking by embedding both modalities into a shared reasoning framework.

This demo shows that:

- VLMs can run fully locally

- Real-time captioning is feasible on consumer hardware

- Efficient architectures like SmolVLM make deployment practical

- Multimodal AI is shifting from research to usable systems

Happy reading! 📖✨

Thanks for Reading!

Website/Newletter AIMagazine Substack

Connect with me on Linkedin

Find me on Github

Visit my technical channel on Youtube

Support: Buy me a Cofee/Chai